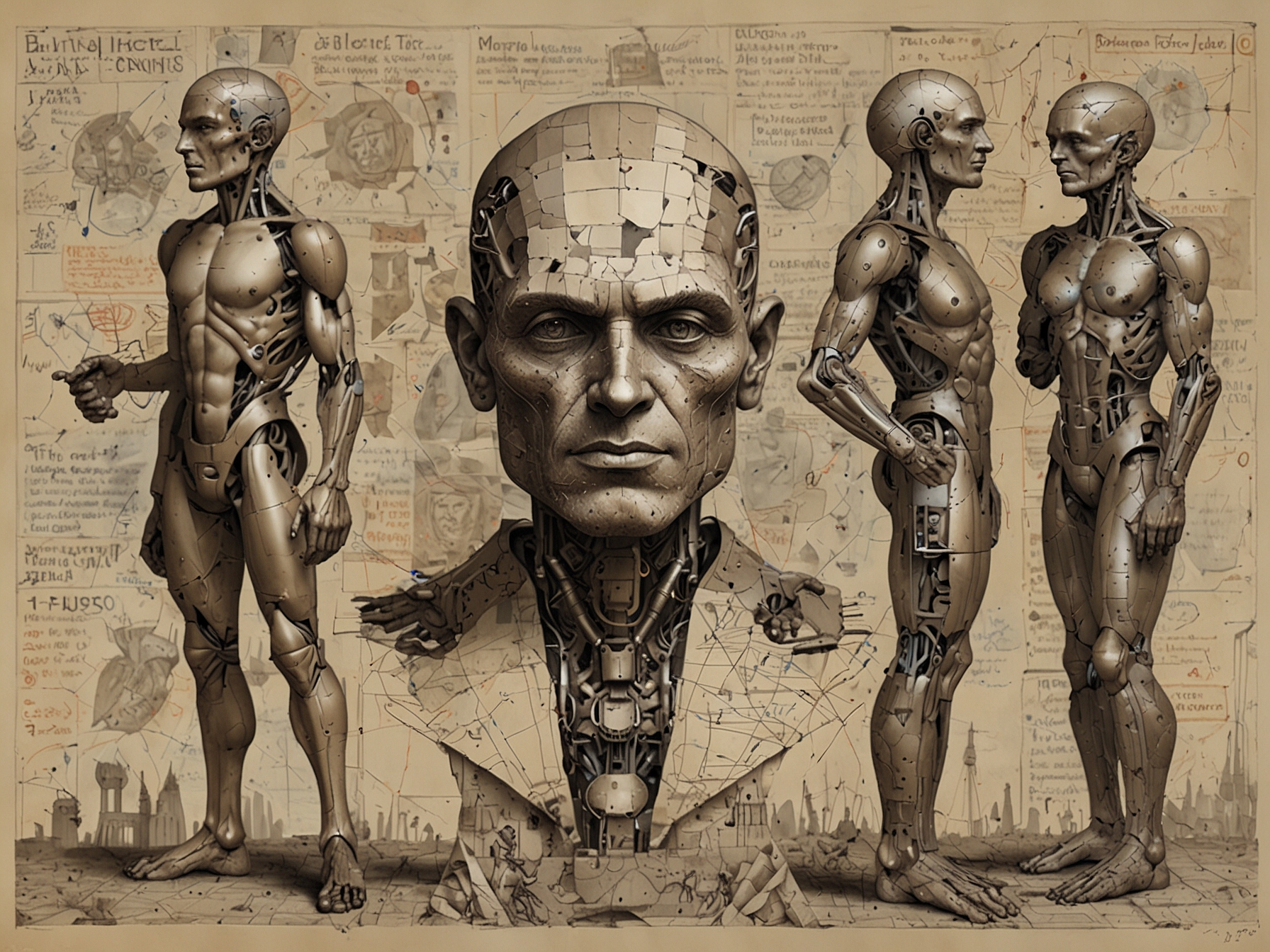

Artificial Intelligence (AI) is not just a modern marvel; its origins trace back centuries. The concept of inanimate objects coming to life as intelligent beings has been a staple of folklore, myth, and dream long before it became a reality through technological advancements.

© FNEWS.AI – Images created and owned by Fnews.AI, any use beyond the permitted scope requires written consent from Fnews.AI

The roots of AI can be found in Greek mythology, in figures such as Talos, a bronze automaton said to have been forged by the god Hephaestus. These early imaginings laid the groundwork for future generations to ponder a world where machines could think and act autonomously.

In the 20th century, foundational work in AI began to take shape through the mathematician Alan Turing. In 1936 he described a ‘universal machine’ that could carry out any computation expressible as a set of instructions. In 1950 he proposed what became known as the Turing Test, a benchmark for whether a machine can exhibit intelligent behavior indistinguishable from that of a human.

The 1950s marked significant milestones. John McCarthy coined the term ‘artificial intelligence’ in the 1955 proposal for the Dartmouth Conference, which took place in the summer of 1956. This conference is often considered the birth of AI as an academic field of study. Early efforts focused on narrow tasks such as playing chess and solving rudimentary problems.

AI then went through a series of booms and busts. The busts, often called ‘AI winters’, were periods when high expectations met slow progress due to technological limitations, and interest and funding declined. Despite these hurdles, the field continued to evolve, and renewed work on machine learning algorithms in the 1980s and 1990s led to significant breakthroughs.

One of the most widely publicized breakthroughs came in 1997, when IBM’s Deep Blue defeated the reigning world chess champion, Garry Kasparov, in a six-game match. Deep Blue’s victory was a monumental moment, showing that a machine could outperform the best human player in a complex, well-defined task.

As the 21st century dawned, AI advanced rapidly due to the exponential growth in computational power and data availability. The development of algorithms capable of learning and making decisions without explicit programming marked the advent of modern AI. Machine learning and, more specifically, deep learning revolutionized fields ranging from healthcare to finance.

Today, AI is integrated into daily life, from voice-activated assistants like Siri and Alexa to sophisticated recommendation engines on platforms like Netflix and Amazon. AI’s role in the medical field is expanding, assisting in diagnostics, treatment plans, and personalized medicine.

Looking towards the future, AI has the potential to transform industries on an even grander scale. Self-driving cars, advanced robotics, and AI-driven climate modeling are just a few areas where AI research is breaking new ground.

Ethical considerations and regulatory frameworks are becoming crucial as AI’s capabilities grow. Issues such as data privacy, bias in algorithms, and the potential for job displacement must be addressed proactively. Thought leaders in AI stress the importance of creating ‘explainable AI’ systems that can provide transparency in decision-making processes.

Quantum computing is often described as a possible next frontier for AI, with the promise of solving complex problems that are currently infeasible due to computational limitations. The fusion of AI and quantum computing could herald a new era of innovation across various scientific and industrial fields.

In summary, the journey of AI from ancient myths to contemporary breakthroughs showcases human ingenuity and the relentless pursuit of knowledge. As we look to the future, understanding the historical milestones of AI provides invaluable insights into its transformative potential, guiding us towards advancements that could reshape our world.